Please use a sample size of 95:5 for training: validation sets, i.e. You can split the Treebank dataset into train and validation sets. Note that using only 12 coarse classes (compared to the 46 fine classes such as NNP, VBD etc.) will make the Viterbi algorithm faster as well. The Universal tagset of NLTK comprises only 12 coarse tag classes as follows: Verb, Noun, Pronouns, Adjectives, Adverbs, Adpositions, Conjunctions, Determiners, Cardinal Numbers, Particles, Other/ Foreign words, Punctuations. Why does the Viterbi algorithm choose a random tag on encountering an unknown word? Can you modify the Viterbi algorithm so that it considers only one of the transition or emission probabilities for unknown words?įor this assignment, you’ll use the Treebank dataset of NLTK with the 'universal' tagset.based on morphological cues) that can be used to tag unknown words? You may define separate python functions to exploit these rules so that they work in tandem with the original Viterbi algorithm.

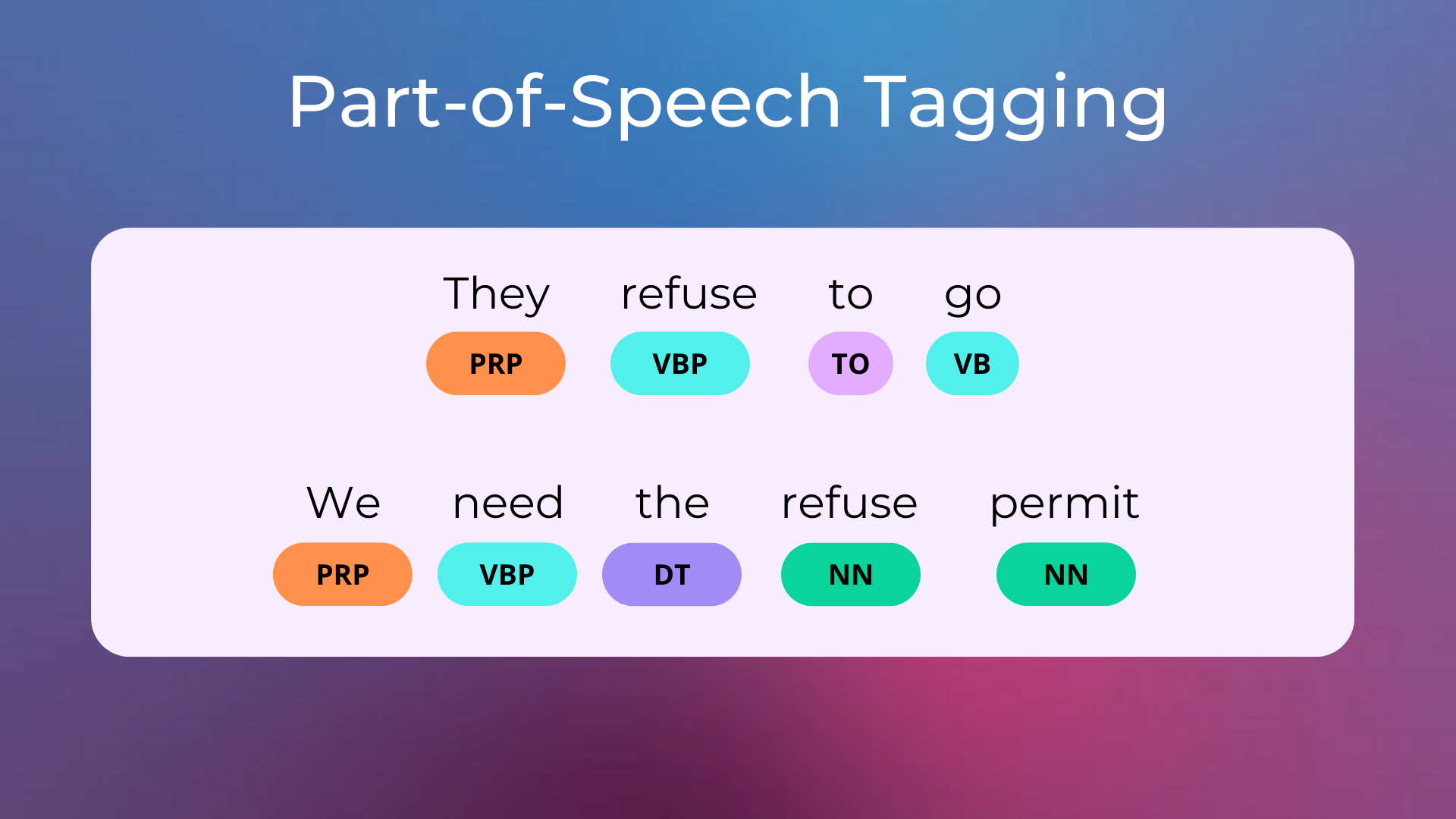

Which tag class do you think most unknown words belong to? Can you identify rules (e.g.Though there could be multiple ways to solve this problem, you may use the following hints: In this assignment, you need to modify the Viterbi algorithm to solve the problem of unknown words using at least two techniques. This is because, for unknown words, the emission probabilities for all candidate tags are 0, so the algorithm arbitrarily chooses (the first) tag. not present in the training set, such as 'Twitter'), it assigned an incorrect tag arbitrarily. 13% loss of accuracy was majorly due to the fact that when the algorithm encountered an unknown word (i.e. The vanilla Viterbi algorithm we had written had resulted in ~87% accuracy. You have learnt to build your own HMM-based POS tagger and implement the Viterbi algorithm using the Penn Treebank training corpus. HMMs and Viterbi algorithm for POS tagging
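The two hints can be sketched together on a toy corpus. This is only a minimal illustration, not the assignment's reference solution: the suffix rules in `SUFFIX_RULES`, the helper names (`rule_based_tag`, `train_hmm`, `prob`, `viterbi`), the `use_rules` switch and the three training sentences are all assumptions made up for this example, and no smoothing is applied. For an unknown word, the sketch either ignores the emission probability and relies on the transition probability alone, or forces the tag guessed by the morphological rules.

```python
import re
from collections import Counter, defaultdict

# Hypothetical morphological rules, for illustration only; unmatched words default to NOUN.
SUFFIX_RULES = [
    (re.compile(r"^-?\d+([.,]\d+)*$"), "NUM"),
    (re.compile(r".*(ing|ed)$"), "VERB"),
    (re.compile(r".*ly$"), "ADV"),
    (re.compile(r".*(ous|ful|able|ive)$"), "ADJ"),
]

def rule_based_tag(word):
    """Guess a universal tag from the word's surface form."""
    for pattern, tag in SUFFIX_RULES:
        if pattern.match(word.lower()):
            return tag
    return "NOUN"

def train_hmm(tagged_sentences):
    """Collect transition and emission counts from (word, tag) sentences."""
    transitions = defaultdict(Counter)  # previous tag -> counts of next tag
    emissions = defaultdict(Counter)    # tag -> counts of (lower-cased) word
    for sentence in tagged_sentences:
        prev = "<s>"                    # sentence-start pseudo-tag
        for word, tag in sentence:
            transitions[prev][tag] += 1
            emissions[tag][word.lower()] += 1
            prev = tag
    vocab = {w for counts in emissions.values() for w in counts}
    return transitions, emissions, vocab

def prob(counter, key):
    """Relative frequency of key in counter (0.0 if the counter is empty)."""
    total = sum(counter.values())
    return counter[key] / total if total else 0.0

def viterbi(words, transitions, emissions, vocab, use_rules=False):
    """Viterbi decoding that handles unknown words via rules or transitions only."""
    if not words:
        return []
    tags = list(emissions)
    best = []  # best[i][tag] = (score of best path ending in tag, backpointer)
    for i, word in enumerate(words):
        w = word.lower()
        known = w in vocab
        guess = rule_based_tag(word) if (use_rules and not known) else None
        if guess not in tags:
            guess = None  # rule produced an unseen tag: fall back to transitions
        column = {}
        for tag in tags:
            if known:
                emit = prob(emissions[tag], w)
            elif guess is not None:
                emit = 1.0 if tag == guess else 0.0  # technique 1: rule-based tag
            else:
                emit = 1.0                           # technique 2: transition only
            if i == 0:
                column[tag] = (prob(transitions["<s>"], tag) * emit, None)
            else:
                prev_tag, score = max(
                    ((p, best[i - 1][p][0] * prob(transitions[p], tag)) for p in tags),
                    key=lambda pair: pair[1],
                )
                column[tag] = (score * emit, prev_tag)
        best.append(column)
    # Follow the backpointers from the best final tag.
    last = max(tags, key=lambda t: best[-1][t][0])
    path = [last]
    for i in range(len(words) - 1, 0, -1):
        last = best[i][last][1]
        path.append(last)
    return list(zip(words, reversed(path)))

# Toy training data (made up for this example).
train = [
    [("the", "DET"), ("dog", "NOUN"), ("barks", "VERB")],
    [("a", "DET"), ("cat", "NOUN"), ("sleeps", "VERB")],
    [("the", "DET"), ("cat", "NOUN"), ("runs", "VERB"), ("quickly", "ADV")],
]
transitions, emissions, vocab = train_hmm(train)
print(viterbi(["the", "Twitter", "sleeps"], transitions, emissions, vocab))
print(viterbi(["the", "dog", "jumped"], transitions, emissions, vocab, use_rules=True))
```

On this toy corpus the unknown word 'Twitter' ends up tagged NOUN, because DET is only ever followed by NOUN in the training sentences. On the real Treebank data you would likely want to compare both variants on the validation set, since a hard rule-based tag can zero out every path when the transition into that tag was never observed.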
